Unencrypted by Default (And Other Dirty Secrets)

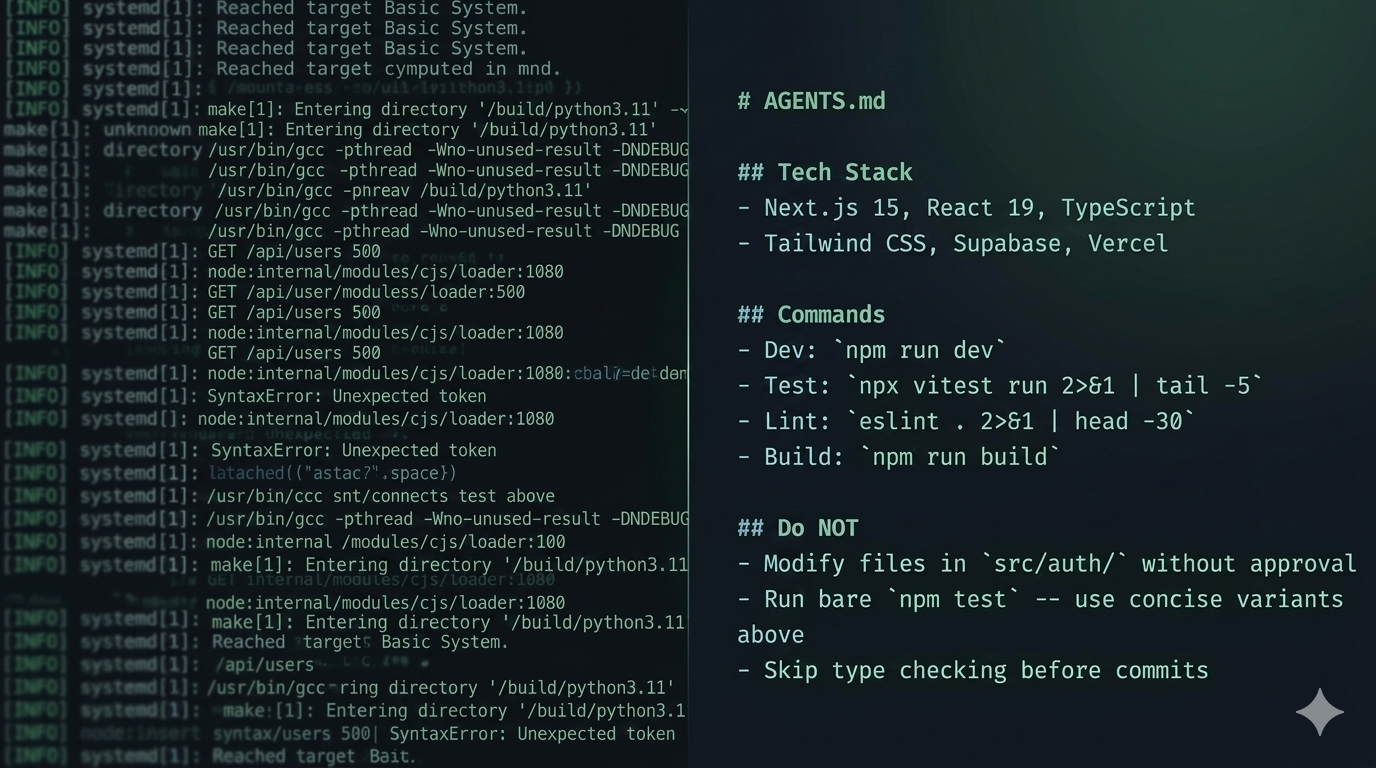

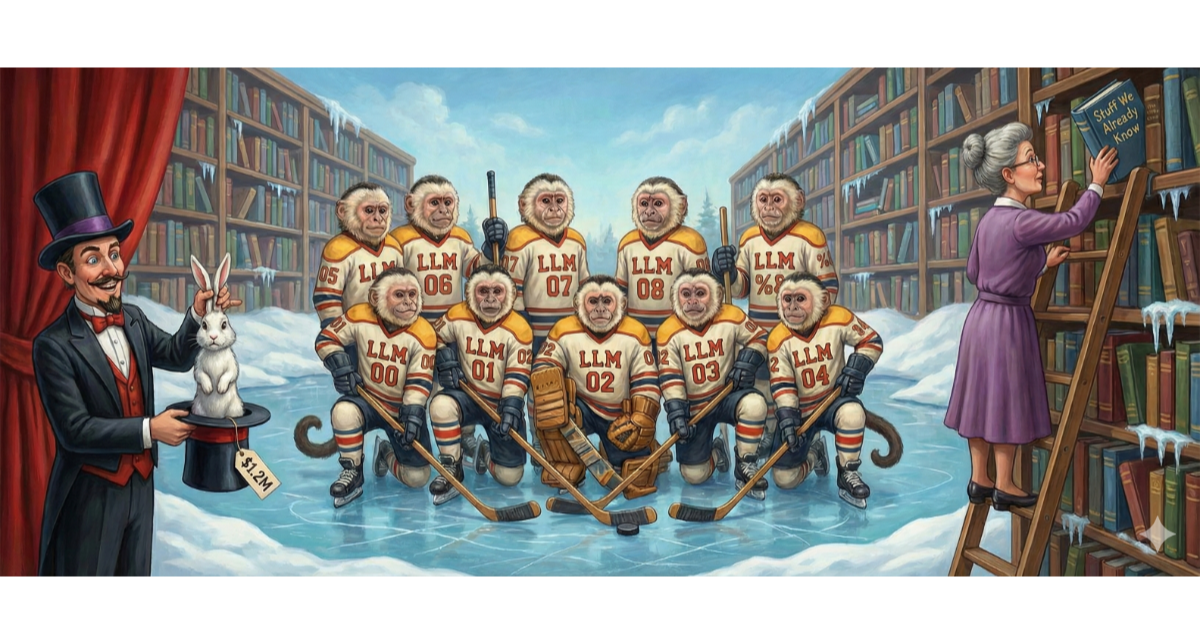

Vercel stored customer environment variables unencrypted unless developers manually toggled a sensitivity flag. When an attacker pivoted through a compromised OAuth token and enumerated those variables, the blast radius wasn't Vercel's data. It was every downstream service those credentials could unlock.
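One way to reason about that exposure is to audit which variables look like credentials but were never flagged as sensitive. The following is a minimal sketch under stated assumptions: the `EnvVar` record, its `sensitive` field, and the name patterns are hypothetical illustrations, not Vercel's actual API or schema.

```python
import re
from dataclasses import dataclass


# Hypothetical record shape for an exported variable inventory;
# this is NOT Vercel's actual API schema.
@dataclass(frozen=True)
class EnvVar:
    key: str
    sensitive: bool


# Name fragments that commonly indicate a credential (illustrative, not exhaustive).
SECRET_HINT = re.compile(
    r"(SECRET|TOKEN|PASSWORD|PASSWD|API_?KEY|PRIVATE_?KEY|CREDENTIAL)",
    re.IGNORECASE,
)


def unprotected_secrets(env_vars):
    """Return keys that look like credentials but are not flagged sensitive."""
    return [
        v.key
        for v in env_vars
        if not v.sensitive and SECRET_HINT.search(v.key)
    ]


if __name__ == "__main__":
    # Made-up inventory for illustration only.
    inventory = [
        EnvVar("STRIPE_SECRET_KEY", sensitive=False),
        EnvVar("DATABASE_PASSWORD", sensitive=True),
        EnvVar("NEXT_PUBLIC_SITE_URL", sensitive=False),
    ]
    for key in unprotected_secrets(inventory):
        print(f"rotate and mark as sensitive: {key}")
```

Name matching is only a heuristic: a credential stored under an innocuous name would slip past it, so a check like this complements rotating every exposed value rather than replacing it.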

- security
- supply chain
- DevOps

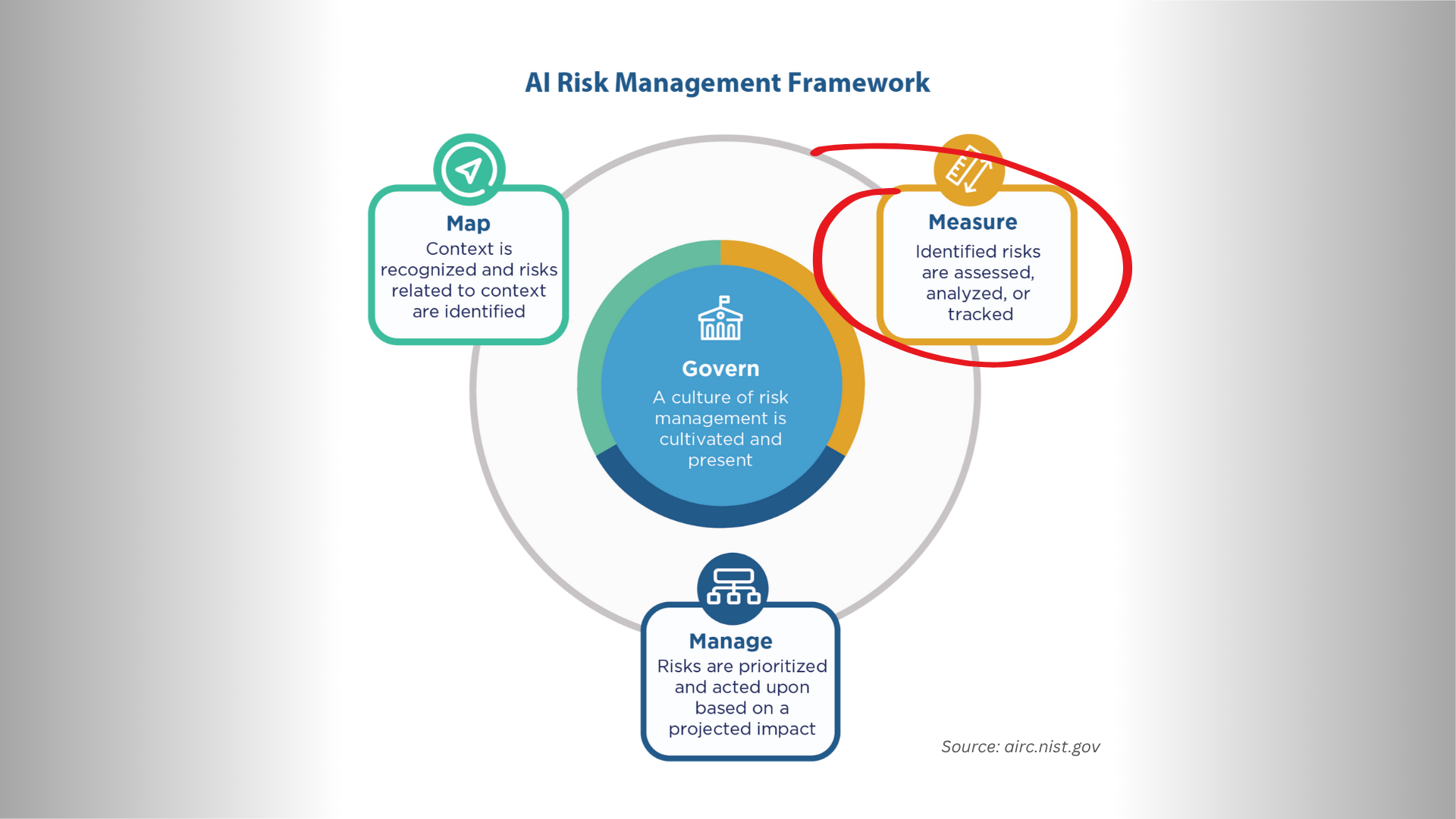

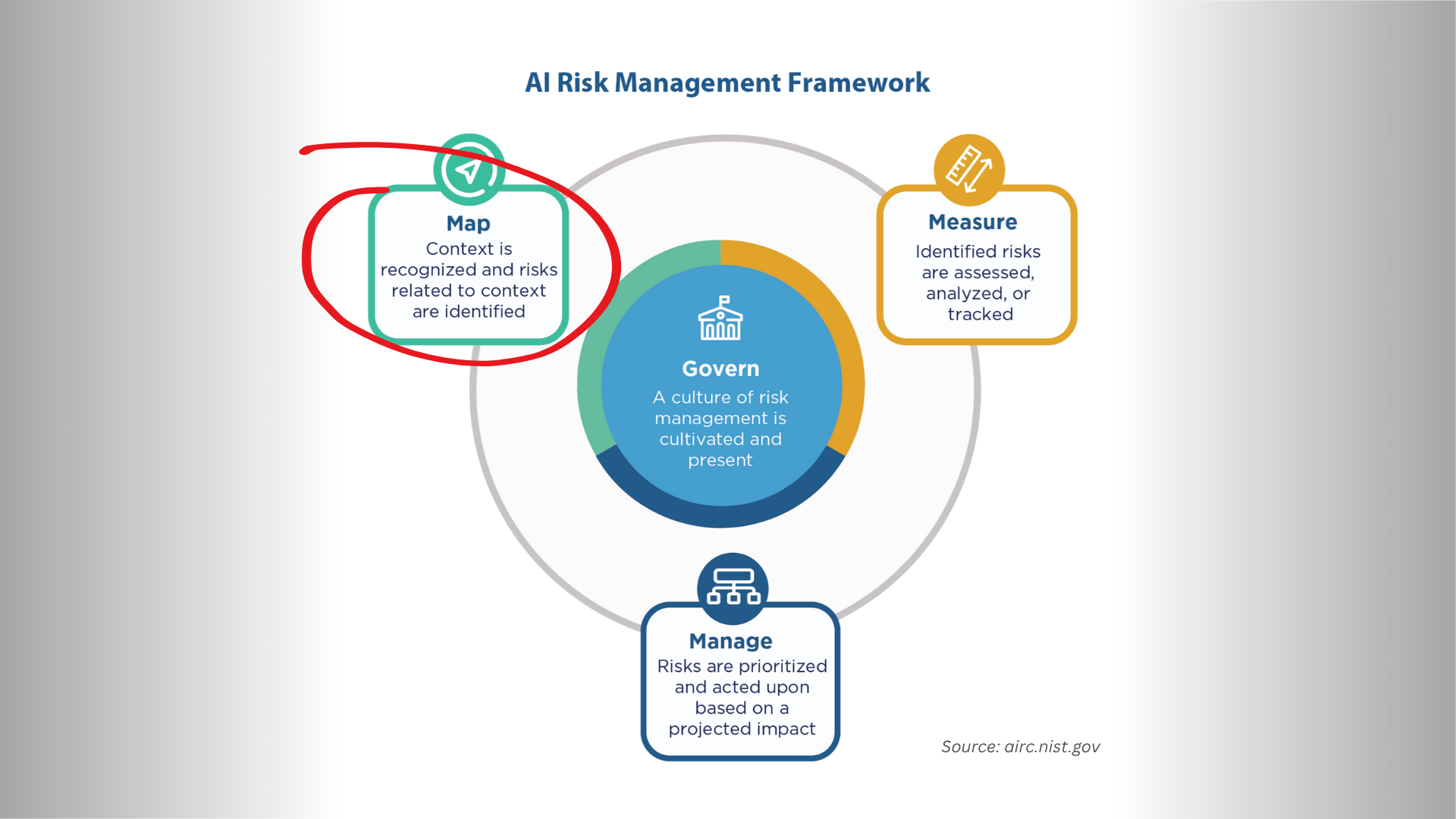

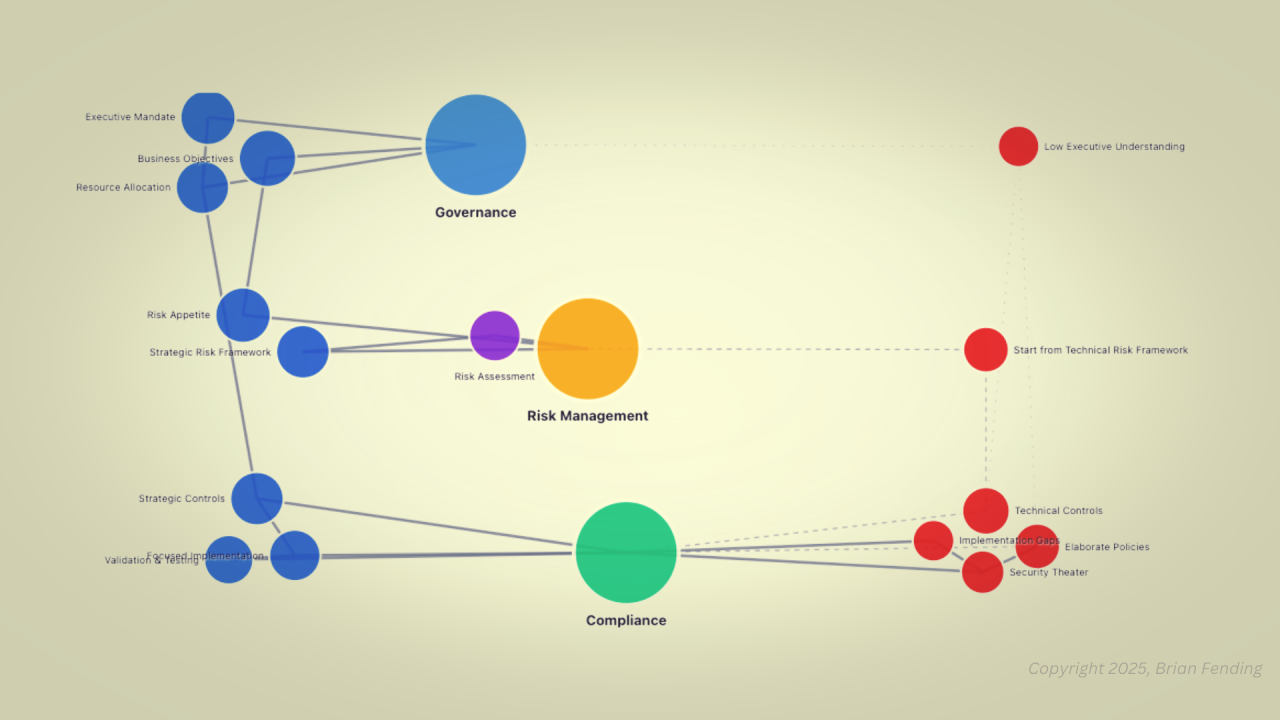

- risk management
- enterprise security
- governance